Comparing Extractive vs Generative Summarization

Last Edited July 2023

NOTE: This was written in July 2023 using Llama 1, when the hallucination gap between an LLM and BERT was much larger. For 100% precision BERT is still the answer, but LLMs have advanced to the point that in 2025 they are sufficient for most purposes.

Why use extractive summarization when generative AI gives it more flavor?

One word: fidelity.

The very nature of generative AI is to ‘create’, but when you’re trying to summarize a legal document or the transcript of a public meeting, creativity can be extremely unhelpful.

Even something that’s accurate 90% of the time is still unhelpful, because that 10% throws the rest into doubt and gives the user a justified sense of unease that they must always check what they are reading.

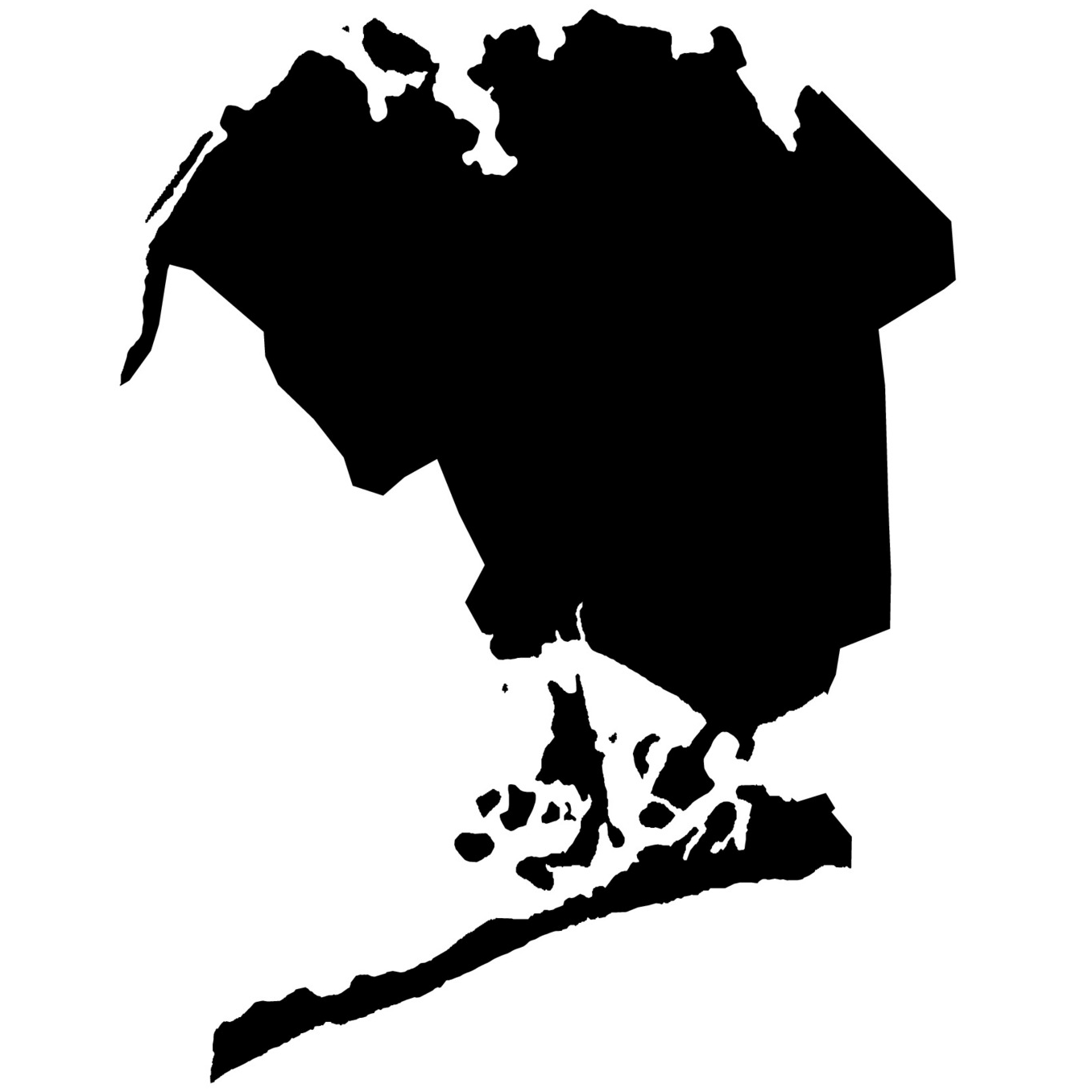

For example, when asked to summarize an NYC Council transcript discussing granting a developer the rights to build a 40-story tower, Llama invented a fictitious story about the ground-floor tenant, a supermarket, being a family business.

It reads perfectly. “Quote from transcript, A family business.”

Except nowhere in the actual conversation did the word “family” even come up.

This presents a troubling issue: the invented detail reads just as convincingly as the real ones.

A Possible Solution in the form of UI

After debating extractive vs. generative, I settled on the former for the reasons described above, but I wasn’t yet ready to let go of generative summaries entirely.

There is just something so appealing about a creative writer that I decided I would include generative AI summaries. Rather than just mix them in with the other unedited content, though, I would mark what’s AI-generated with color highlighting.

I decided blue highlighting would denote generative-summary and no highlighting would convey that it's unedited.

Users are already able to see where the extractive-summary content comes from by clicking any sentence to see it in its original context.
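The two UI behaviors described above could be sketched roughly as follows. This is only an illustration under stated assumptions, not the actual implementation: the `Segment` class, the `render` and `context` functions, the CSS class names, the exact shade of blue, and the sample transcript text are all hypothetical. It also assumes extractive sentences are copied verbatim from the transcript, so a plain substring search is enough to find their original context.

```python
from dataclasses import dataclass
from html import escape
from typing import List, Optional


@dataclass
class Segment:
    text: str
    generative: bool  # True = written by the LLM, False = lifted verbatim from the source


def render(segments: List[Segment]) -> str:
    """Render segments as HTML, highlighting only the generative ones in blue."""
    parts = []
    for seg in segments:
        body = escape(seg.text)
        if seg.generative:
            # Hypothetical styling: a light blue background marks AI-generated text.
            parts.append(f'<span class="generative" style="background:#cfe8ff">{body}</span>')
        else:
            # Unedited source text gets no highlight.
            parts.append(f'<span class="extractive">{body}</span>')
    return " ".join(parts)


def context(transcript: str, sentence: str, window: int = 80) -> Optional[str]:
    """Return the sentence with `window` characters of surrounding transcript,
    or None if the sentence does not appear verbatim in the transcript."""
    start = transcript.find(sentence)
    if start == -1:
        return None
    lo = max(0, start - window)
    hi = min(len(transcript), start + len(sentence) + window)
    return transcript[lo:hi]


# Made-up sample data, for illustration only.
transcript = (
    "The chair opened the hearing. "
    "The applicant requests approval for a 40-story tower. "
    "Public comment followed."
)
segments = [
    Segment("The applicant requests approval for a 40-story tower.", generative=False),
    Segment("The council discussed a tower proposal.", generative=True),
]
page = render(segments)
surrounding = context(transcript, segments[0].text, window=30)
```

A side effect of this design is that `context` returns `None` for any text that is not in the transcript, so a generated phrase such as “family business” simply has no original context to click through to.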